Proofreading in accordance with DQF-MQM

The increasing diversity of requirements placed on the content of translated texts has made measuring translation quality more complex. In every genre, writers and readers bring their own, often differing, expectations to a text.

On top of that, every text type has its own specific style.

For example, operating instructions should be written in language that is as neutral as possible, whereas a press release about the same product would present it in far more evaluative terms. Many further qualities and features could be listed as characteristic of each text type.

An assessment of whether a text and its translation meet the required quality standards is far more meaningful if it is based on criteria agreed upon in advance.

The Dynamic Quality Framework (DQF) addresses precisely this need. It makes it possible to measure the quality of translations and related language services within a diversified, customer-specific framework.

Its predefined categories create a consistent standard that applies across industries, which makes results comparable.

DQF was developed by the TAUS User Group and is designed to respond flexibly and dynamically to the many factors involved in assessing and measuring quality.

These factors include constant changes in market and customer requirements as well as rapid technological progress in the industry, for instance in machine translation. To turn these diverse requirements into a simple, usable metric, DQF was combined with the Multidimensional Quality Metrics (MQM) developed by the German Research Center for Artificial Intelligence (Deutsches Forschungszentrum für Künstliche Intelligenz: DFKI). The resulting joint DQF-MQM metric forms part of the QT21 project, which was supported by the European Union.

When a review is conducted in accordance with the DQF-MQM metric, the document is checked not only for linguistic quality; the focus is above all on how well the translated content meets the expectations of the target group or target market. This comprehensive metric is tailored to each customer's specific requirements in collaboration with them and adjusted flexibly as those requirements change.

Because of this flexible approach, proofreading according to the DQF-MQM framework is particularly recommended for text types that frequently attract customer feedback or market responses, and for which the main priority is how well the text functions for its target group.

Are you interested in proofreading in accordance with the DQF-MQM framework for your next translation, or do you need more information? Contact us by telephone on +49 (0) 221 9259860 or by email at tsd@tsd-int.com.

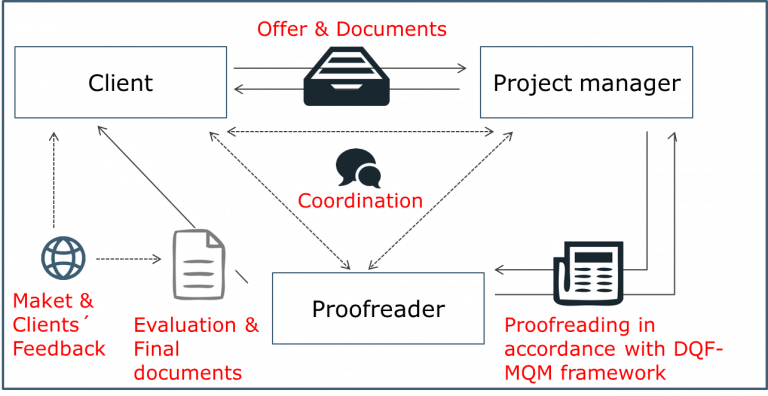

Standard workflow

- The project manager analyses the documents using the translation management system (TMS) and sends the customer a quotation together with an estimate of the processing time.

- Once the customer has accepted the quotation, the project manager discusses any preferences with them and assigns the project to a proofreader, who carries out the proofreading in accordance with the DQF-MQM framework.

- For every translation, the results of the proofreading are recorded either in a separate document or in a dashboard, and made available to the customer.

- The customer provides feedback on the results.

Checklist